The Reddit Research Method: How to Validate Any SaaS Idea in 48 Hours

A step by step framework for using Reddit data to validate SaaS ideas in 48 hours or less. Includes the exact process used to analyze 8,000+ threads, score pain signals, map competitors, and determine if an idea is worth building.

Most SaaS validation advice is either too slow or too shallow. "Do 50 customer interviews" takes weeks. "Search Product Hunt" gives you competitors, not demand signals. "Post a landing page" tests your marketing skills, not your idea.

The Reddit Research Method sits in the middle: deep enough to surface real patterns, fast enough to complete in a single weekend. I developed it while building ValidSaaS after my own SaaS idea failed spectacularly. I had built an entire CRM for UK care agencies based on an assumption that turned out to be wrong. Two phone calls would have saved me months of work.

Since then, I have refined this method across 8,000+ Reddit threads and 300,000+ comments. Every SaaS builder, indie hacker, solopreneur, or vibe coder can use it. You do not need to code. You do not need a budget. You need 48 hours and a structured approach.

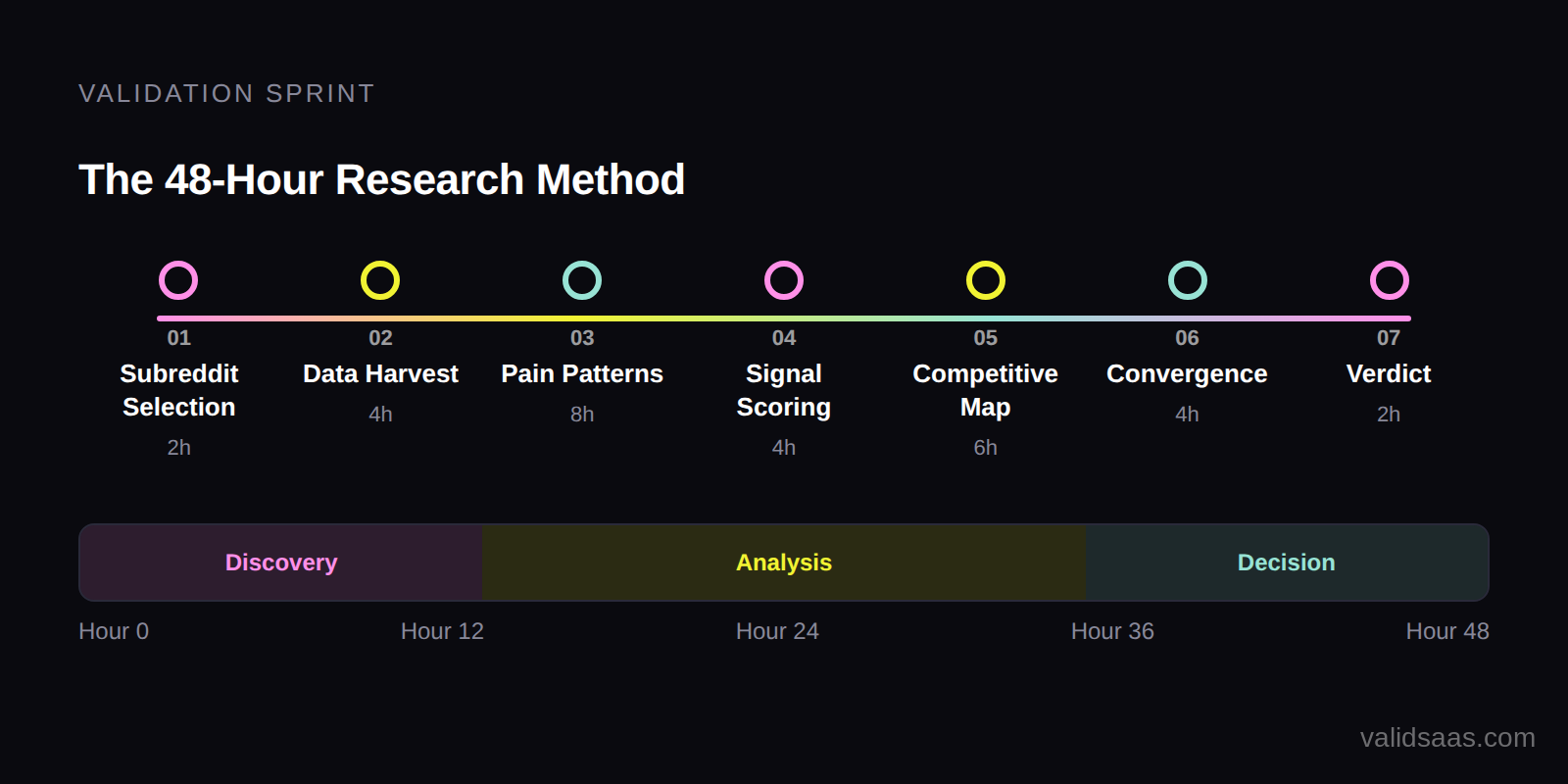

The 48 Hour Timeline

Here is how the time breaks down. This is not aspirational. This is based on how long each step actually takes when done systematically.

| Hour Block | Activity | Output |

|---|---|---|

| Hours 1 to 4 | Subreddit selection and initial data harvest | 100 to 200 posts collected across 2 to 3 subreddits |

| Hours 5 to 10 | Pain pattern identification and categorization | 5 to 10 pain categories ranked by frequency |

| Hours 11 to 16 | Signal scoring (three tier framework) | Each pain point scored by reliability |

| Hours 17 to 24 | Competitive landscape mapping | Competitor list with pricing, features, and gaps |

| Hours 25 to 32 | Cross community convergence testing | Validation across independent subreddits |

| Hours 33 to 40 | Opportunity scoring and ranking | Final opportunity score card |

| Hours 41 to 48 | Decision: build, pivot, or kill | Clear go/no go verdict |

If you use a tool like ValidSaaS to automate the scraping and initial analysis, hours 1 to 10 compress to about 30 minutes per subreddit, which frees up time for the deeper analytical work.

Phase 1: Selecting Your Target Subreddits (Hours 1 to 2)

The subreddits you choose determine the quality of your data. Pick wrong, and you waste 48 hours on noise.

The Three Subreddit Strategy

Always research across three types of subreddits:

Type A: Broad SaaS/Startup Community. r/SaaS, r/Entrepreneur, r/startups, or r/microsaas. These show macro patterns and trending pain points. They tell you what the broader market cares about.

Type B: Your Target Audience Community. If you are building for freelancers, go to r/freelance. For accountants, r/accounting. For developers, r/webdev. These show domain specific pain in the language your customers use.

Type C: An Adjacent Community. A community that overlaps with your target audience but approaches problems from a different angle. If your Type B is r/freelance, your Type C might be r/smallbusiness or r/Entrepreneur. The convergence between B and C is where the highest confidence signals live.

| Subreddit Type | Purpose | Example | What to Harvest |

|---|---|---|---|

| Type A: Broad | Macro patterns, trending pain | r/SaaS | Top 50 posts from last 30 days |

| Type B: Target audience | Domain specific pain language | r/freelance | Top 50 posts from last 30 days |

| Type C: Adjacent | Cross community validation | r/smallbusiness | Top 50 posts from last 30 days |

For a detailed breakdown of which subreddits produce the best signals, read How to Find SaaS Ideas on Reddit: 7 Subreddits Where Users Share Real Pain Points.

How to Find Your Type B and Type C Subreddits

Picking your Type A is easy (r/SaaS, r/Entrepreneur). The harder question is finding the right Type B and Type C communities for your specific niche. Here are three methods that work:

1. Map of Reddit. Map of Reddit is a free interactive visualization built from 176 million Reddit comments. Every subreddit is a dot on the map, and communities that share the most users are clustered together. Search for your Type B subreddit, click "Show Related," and you will see first and second degree connections. This is the fastest way to discover niche communities you did not know existed.

2. Ask an AI. Open Gemini, ChatGPT, or Claude and prompt: "I am building a SaaS for [your audience]. What Reddit communities would this audience be active in? Include niche subreddits between 50K and 500K members." AI models are trained on Reddit data and are surprisingly good at surfacing non obvious communities. Use this as a brainstorm, then verify each suggestion with Map of Reddit.

3. Reddit's native search. Search your topic on Reddit and click the "Communities" tab. This surfaces subreddits by keyword relevance. It is the simplest method but misses communities that discuss the problem using different vocabulary.

Combine at least two of these methods. The goal is to find one Type B subreddit where your target audience congregates and one Type C subreddit whose members overlap with that audience but approach the same problems from a different angle.

Phase 2: Harvesting the Data (Hours 2 to 4)

Pull the top 50 to 100 posts from each subreddit for the last 30 days. Include all comments. The comments are where the real gold is because that is where people elaborate, argue, and share specific details about their problems.

What to capture for each post:

- Post title and body text

- All comments and nested replies

- Upvote counts on both posts and comments

- The subreddit it came from (for convergence testing later)

- Any tool names mentioned (for competitive mapping)

Do not cherry pick. You need volume to see patterns. If you only read the posts that look interesting, you will fall prey to confirmation bias. Pull everything and let the data tell you what matters.

The Manual Way (Free, Slow, But It Works)

Here is exactly how to do this by hand, step by step. I am showing you this because I genuinely believe you should understand the process before deciding whether to automate it.

Step 1: Get the raw data using Reddit's JSON trick. Reddit exposes every page as structured data. Add .json to the end of any Reddit URL and you get the raw post and comment data. For example:

- reddit.com/r/SaaS/top/.json?t=month&limit=100 returns the top 100 posts from r/SaaS in the last month

- reddit.com/r/SaaS/comments/[post_id]/.json returns a single post with all its comments

Open those URLs in your browser. You will see raw JSON. Copy it into a file. Do this for each of your 3 target subreddits. Then grab the comments JSON for every post you pulled, not only the ones that look interesting, so the sample stays unbiased.
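
Once the JSON is saved, a short script can pull out the fields listed in Phase 2. The following is a minimal Python sketch, not an official client. It assumes the usual Reddit layout: a listing keeps its posts under data.children, a comment page is a two element list (the post, then the comments), and empty replies come back as an empty string. The function names and file paths are hypothetical.

```python
import json

def listing_url(subreddit, period="month", limit=100):
    """Build the top-posts JSON URL described above."""
    return f"https://www.reddit.com/r/{subreddit}/top/.json?t={period}&limit={limit}"

def parse_posts(listing):
    """Extract the fields worth keeping from a saved listing JSON object."""
    posts = []
    for child in listing["data"]["children"]:
        d = child["data"]
        posts.append({
            "id": d["id"],
            "subreddit": d["subreddit"],
            "title": d["title"],
            "body": d.get("selftext", ""),
            "upvotes": d.get("score", 0),
            "comment_count": d.get("num_comments", 0),
        })
    return posts

def flatten_comments(comment_listing):
    """Walk a comment listing recursively; return every comment, nested replies included."""
    out = []
    for child in comment_listing["data"]["children"]:
        if child["kind"] != "t1":      # skip "more" placeholders and anything that is not a comment
            continue
        d = child["data"]
        out.append({"body": d["body"], "upvotes": d.get("score", 0)})
        replies = d.get("replies")
        if isinstance(replies, dict):  # assumption: empty replies arrive as ""
            out.extend(flatten_comments(replies))
    return out

def load_posts(path):
    """Read a listing you saved from the browser (path is whatever you named the file)."""
    with open(path, encoding="utf-8") as f:
        return parse_posts(json.load(f))

def load_comments(path):
    """Read a saved comment page; element 0 is the post, element 1 holds the comments."""
    with open(path, encoding="utf-8") as f:
        page = json.load(f)
    return flatten_comments(page[1])
```

Keeping the subreddit and upvote count on every record matters later: the convergence test in Phase 6 needs to know where each complaint came from.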

Step 2: Organize into a spreadsheet. Create columns: Post Title, Subreddit, Upvotes, Comment Count, Key Quotes, Pain Category, Signal Tier. Read through each post and its comments manually. Copy relevant quotes. Categorize each pain point. Score each signal using the three tier framework.
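
If you would rather generate the spreadsheet than type it, here is a minimal sketch that writes the columns named above to CSV using Python's standard library. The example row is made up, and the judgment columns (Key Quotes, Pain Category, Signal Tier) are deliberately left blank for you to fill in while reading.

```python
import csv
import io

COLUMNS = ["Post Title", "Subreddit", "Upvotes", "Comment Count",
           "Key Quotes", "Pain Category", "Signal Tier"]

def write_research_sheet(rows, out):
    """Write research rows to a file-like object as CSV with the columns above."""
    writer = csv.DictWriter(out, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        # Missing fields stay blank so they can be filled in during manual review.
        writer.writerow({col: row.get(col, "") for col in COLUMNS})

# Example with one made-up row, written to memory instead of a file.
buffer = io.StringIO()
write_research_sheet(
    [{"Post Title": "Example post", "Subreddit": "r/SaaS",
      "Upvotes": 120, "Comment Count": 45}],
    buffer,
)
print(buffer.getvalue())
```

To write a real file, pass open("research.csv", "w", newline="") instead of the in-memory buffer, then open the result in any spreadsheet tool.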

Step 3: This is where most people try to shortcut with ChatGPT or Gemini. And this is where it breaks down.

You might think: "I will just paste all this data into ChatGPT or Gemini and ask it to find patterns." I tried this. Here is what actually happens:

| What You Expect | What Actually Happens |

|---|---|

| AI reads all 100 posts and identifies patterns | AI summarizes the first 10 to 20 posts well, then quality drops sharply |

| Nuanced pain point categorization | Generic categories like "marketing challenges" that miss the specifics |

| Accurate signal scoring | AI cannot reliably distinguish Tier 1 from Tier 3 without domain context |

| Cross referencing across subreddits | Token limits force you to analyze each subreddit separately, losing the convergence signal |

The problem is not intelligence. ChatGPT and Gemini are brilliant tools. The problem is context window economics. 100 Reddit posts with full comment threads can run to roughly 500,000 to 1,000,000 tokens of text. Even models with 1M+ token context windows experience significant quality degradation when processing that volume. Published research on long context models shows that LLM performance drops on tasks requiring recall from the middle of long contexts. The information in post 47 gets lost in the noise of posts 1 through 100.

I spent weeks trying to make raw LLM analysis work before realizing the approach needed to be fundamentally different. You cannot just throw 300,000 comments at a single prompt and expect reliable patterns.

The manual spreadsheet method works if you have 20 to 40 hours per subreddit. Many successful founders have validated ideas this way. If you have more time than money, do it manually. You will learn a lot in the process.

When Manual Is Not Enough

If you are evaluating multiple ideas (you should be testing at least 5, see SaaS Idea Kill Criteria), the math stops working. 5 ideas x 3 subreddits each x 20 hours per subreddit = 300 hours of research before you write a single line of code.

This is the problem ValidSaaS was built to solve. Not by throwing data at a generic LLM, but through a purpose built analysis pipeline that processes each post and comment individually, scores signals with domain specific context, and then synthesizes patterns across the full dataset without the quality loss you get from stuffing everything into one prompt.

The output is the same research you would do manually: pain categories, signal scores, competitive mentions, convergence data. The difference is that 100 posts with full comments take under 30 minutes instead of 20 to 40 hours. And because the analysis is structured rather than freeform, it catches patterns buried in comment thread 87 that you would miss once your eyes glaze over around hour 6.

If you do not want to do any of this yourself, there is also our Done For You service where we run the research, analysis, and strategic interpretation for you and deliver a complete validation report.

Phase 3: Pain Pattern Identification (Hours 5 to 10)

This is where most people fail. They read through threads and latch onto the first interesting complaint they see. That is anecdote driven research, not pattern driven research.

The Categorization Process

Go through every post and comment. For each complaint, feature request, or frustration expressed, assign it to a category. Do not pre define categories. Let them emerge from the data.

When I did this with 100 posts from r/SaaS, the categories that emerged were:

| Pain Category | Thread Count (out of 100) | Percentage |

|---|---|---|

| Lead generation and customer acquisition | 43 | 43% |

| Idea validation | 18 | 18% |

| Pricing and monetization | 12 | 12% |

| Churn and retention | 9 | 9% |

| Technical architecture | 8 | 8% |

| Hiring and team building | 6 | 6% |

| Legal and compliance | 4 | 4% |

I did not go in looking for lead generation. It surfaced because 43 out of 100 threads organically revolved around it. That is the power of pattern driven research: it shows you what the market actually cares about, not what you assume it cares about.

What Counts as a Pattern

A single post is an anecdote. Five posts describing the same problem in different words is a signal. Twenty posts is a validated pain point. The threshold I use:

- 1 to 4 threads: Interesting but not enough to act on

- 5 to 9 threads: Worth investigating further

- 10 to 19 threads: Strong signal, validate with competitive analysis

- 20+ threads: High confidence opportunity, prioritize
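
The counting and thresholds above are easy to mechanize once every thread has a category label. A minimal sketch, assuming each thread gets exactly one category (a simplification, since real threads sometimes span two) and using the r/SaaS counts from the table above as sample input:

```python
from collections import Counter

def classify_pattern(thread_count):
    """Map a thread count to the thresholds listed above."""
    if thread_count >= 20:
        return "High confidence opportunity, prioritize"
    if thread_count >= 10:
        return "Strong signal, validate with competitive analysis"
    if thread_count >= 5:
        return "Worth investigating further"
    if thread_count >= 1:
        return "Interesting but not enough to act on"
    return "No signal"

def rank_categories(thread_categories):
    """Return (category, count, percent, verdict) tuples, most frequent first."""
    counts = Counter(thread_categories)
    total = len(thread_categories)
    return [(cat, n, round(100 * n / total), classify_pattern(n))
            for cat, n in counts.most_common()]

# One label per thread, reproducing the 100-thread breakdown from the table.
labels = (["Lead generation and customer acquisition"] * 43
          + ["Idea validation"] * 18
          + ["Pricing and monetization"] * 12
          + ["Churn and retention"] * 9
          + ["Technical architecture"] * 8
          + ["Hiring and team building"] * 6
          + ["Legal and compliance"] * 4)

ranking = rank_categories(labels)
for category, count, percent, verdict in ranking:
    print(f"{category}: {count} threads ({percent}%) -> {verdict}")
```

The labeling itself is still the manual part. The script only makes sure the counting and the thresholds are applied the same way every time.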

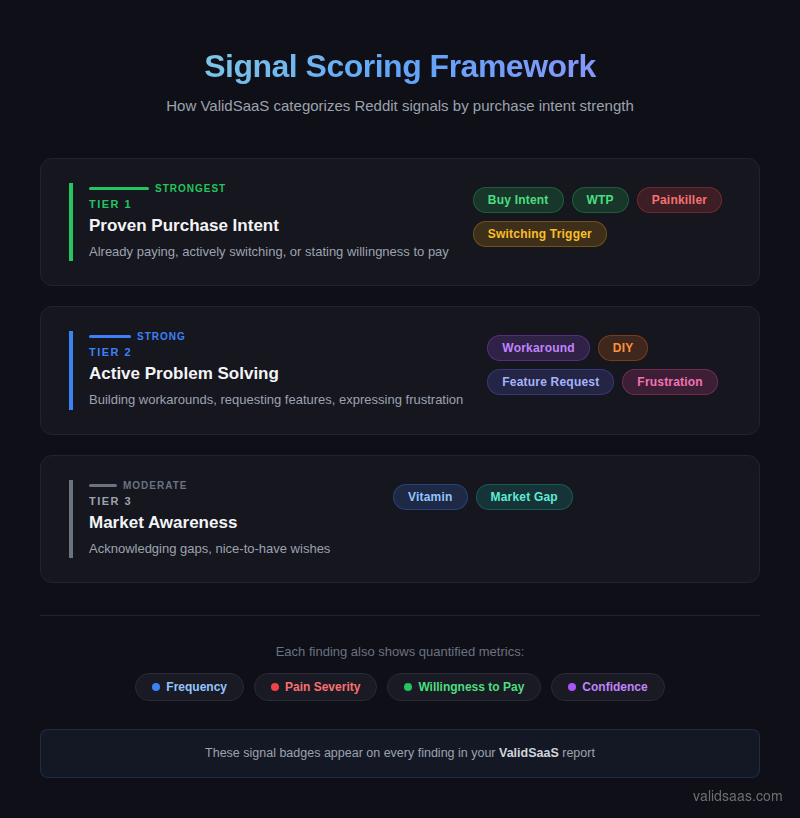

Phase 4: Signal Scoring (Hours 11 to 16)

Not every complaint is worth building for. Some people vent online but would never pay. Others describe pain that is annoying but not painful enough to justify a subscription. You need a framework for scoring signal quality.

I use a three tier framework developed from analyzing 300,000+ comments. ValidSaaS automatically applies these signal badges to every finding in your report, so you can see exactly which tier each data point falls into:

Tier 1: Proven Purchase Intent (Strongest Signal). These are comments where someone is already paying for a broken solution, actively switching tools, or explicitly stating willingness to pay. "I pay $99/mo for X and it still cannot do Y." ValidSaaS flags these with Buy Intent, WTP, Painkiller, and Switching Trigger badges. This is the strongest signal because willingness to pay is proven.

Tier 2: Active Problem Solving (Strong Signal). The commenter is building workarounds, requesting specific features, or expressing deep frustration with quantified pain. "I spend 15 hours a week doing this manually." ValidSaaS flags these with Workaround, DIY, Feature Request, and Frustration badges. When people invest effort into solving a problem themselves, they will usually pay for a better solution.

Tier 3: Market Awareness (Moderate Signal). General acknowledgment that a gap exists or a nice to have wish. "It would be cool if someone built X." ValidSaaS flags these with Vitamin and Market Gap badges. High volume, but lower conversion. These still matter for sizing the market. Just do not build on Tier 3 signals alone.

For each pain category from Phase 3, go through the relevant threads and count how many Tier 1, Tier 2, and Tier 3 signals you find.

| Pain Category | Tier 1 Count | Tier 2 Count | Tier 3 Count | Score |

|---|---|---|---|---|

| Lead generation | 12 | 18 | 13 | Very Strong |

| Idea validation | 3 | 8 | 7 | Strong |

| Pricing/monetization | 2 | 4 | 6 | Moderate |

| Churn/retention | 4 | 3 | 2 | Strong |

A pain category with 10+ Tier 1 signals is a near certain opportunity. A category with mostly Tier 3 signals is probably not worth pursuing, regardless of how many threads mention it.
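
If you tag each signal with its tier as you read, the tally table can be produced automatically. This is a minimal sketch, not how ValidSaaS does it. It applies only the two rules of thumb stated above and treats "mostly Tier 3" as more than half of all signals, which is my assumption. Everything else is left for manual review.

```python
from collections import defaultdict

def tally_tiers(signals):
    """signals: iterable of (pain_category, tier) pairs, with tier in {1, 2, 3}."""
    table = defaultdict(lambda: {1: 0, 2: 0, 3: 0})
    for category, tier in signals:
        table[category][tier] += 1
    return dict(table)

def flag(counts):
    """Apply the two rules of thumb; anything else needs human judgment."""
    total = sum(counts.values())
    if counts[1] >= 10:
        return "near certain opportunity"
    if total and counts[3] > total / 2:   # assumption: "mostly" means more than half
        return "mostly Tier 3, probably not worth pursuing"
    return "review manually"

# Sample input rebuilt from two rows of the table above.
signals = ([("Lead generation", 1)] * 12 + [("Lead generation", 2)] * 18
           + [("Lead generation", 3)] * 13
           + [("Pricing/monetization", 1)] * 2 + [("Pricing/monetization", 2)] * 4
           + [("Pricing/monetization", 3)] * 6)

tiers = tally_tiers(signals)
for category, counts in tiers.items():
    print(category, counts, "->", flag(counts))
```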

Try It Yourself

ValidSaaS scrapes real Reddit conversations and surfaces pain points, demand signals, and opportunities you can actually build on. Start with 2 free harvests.

Phase 5: Competitive Landscape Mapping (Hours 17 to 24)

Once you have identified your top 2 to 3 pain categories, it is time to map who else is trying to solve them. This is not optional. If you skip competitive analysis, you are flying blind.

How to Find Competitors Using Reddit

The fastest way to find competitors is to search Reddit for the tool names mentioned in the threads you have already collected (Method 4 in the original research framework).

When users complain about a problem, they often mention tools they have tried. "I used X but it does not do Y." "Has anyone tried Z? It is $49/mo but I am not sure it is worth it." Each mention is a competitor to investigate.
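
Once you have a first list of tool names from your reading, a few lines of Python can count how often each one comes up across all collected comments. This is a sketch under simple assumptions: matching is case insensitive on whole words, and the tool names below (ToolX, ToolZ) are placeholders, not real products.

```python
import re
from collections import Counter

def count_tool_mentions(comments, tool_names):
    """Count how many comments mention each tool name (case-insensitive, whole word)."""
    patterns = {name: re.compile(rf"\b{re.escape(name)}\b", re.IGNORECASE)
                for name in tool_names}
    mentions = Counter()
    for text in comments:
        for name, pattern in patterns.items():
            if pattern.search(text):
                mentions[name] += 1
    return mentions

# Made-up comments in the style quoted above.
comments = [
    "I used ToolX but it does not do Y.",
    "Has anyone tried ToolZ? It is $49/mo but I am not sure it is worth it.",
    "toolx support never replied to my ticket.",
]

mentions = count_tool_mentions(comments, ["ToolX", "ToolZ"])
for name, count in mentions.most_common():
    print(name, count)
```

Tools that show up most often go to the top of your competitor list. The comments that mention them are also where you will find the complaints for the gap matrix.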

What to Document About Each Competitor

| Data Point | Why It Matters | Where to Find It |

|---|---|---|

| Pricing tiers | Shows willingness to pay ceiling and floor | Competitor website pricing page |

| Core features | Shows what the market considers table stakes | Competitor website, G2, Capterra |

| User complaints | Shows gaps you can fill | Reddit threads, G2 1 to 3 star reviews |

| Founding date | Shows market maturity | Crunchbase, LinkedIn |

| Team size | Shows how much effort is needed to compete | LinkedIn, About page |

| Monthly traffic | Shows market awareness | SimilarWeb free tier |

The Gap Matrix

Create a simple matrix of competitor features vs. user complaints:

| User Want | Competitor A | Competitor B | Competitor C | Gap? |

|---|---|---|---|---|

| Feature X | Yes | No | No | Partial |

| Feature Y | No | Yes | No | Partial |

| Price under $30/mo | No ($49) | No ($79) | Yes ($19) | Yes (quality issue) |

| Easy setup (under 5 min) | No | No | No | Yes (wide open) |

Every row where the Gap column says "Yes" is a potential differentiator for your product. When I did this analysis for Reddit lead generation tools, I found 8 competitors and 4 major unsolved gaps. For a complete framework on competitive analysis, see SaaS Competitor Analysis Framework.
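
A first pass at the Gap column can be derived from the Yes/No cells alone. The sketch below uses a simple rule of my own, not one stated in the framework: no competitor covers the want means an open gap, some do means partial, all do means no gap. The price row in the matrix above shows why this is only a starting point, since a competitor can technically tick the box and still leave a quality gap that only a human read will catch.

```python
def gap_status(coverage):
    """coverage: dict of competitor name -> True/False for a single user want."""
    offered = sum(1 for has_it in coverage.values() if has_it)
    if offered == 0:
        return "Yes (wide open)"
    if offered < len(coverage):
        return "Partial"
    return "No"

# Rows taken from the example matrix above.
matrix = {
    "Feature X": {"Competitor A": True, "Competitor B": False, "Competitor C": False},
    "Feature Y": {"Competitor A": False, "Competitor B": True, "Competitor C": False},
    "Easy setup (under 5 min)": {"Competitor A": False, "Competitor B": False, "Competitor C": False},
}

for want, coverage in matrix.items():
    print(f"{want}: {gap_status(coverage)}")
```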

Phase 6: Cross Community Convergence (Hours 25 to 32)

This is the step most people skip, and it is the step that separates reliable validation from echo chamber effects.

One subreddit can have groupthink. If r/SaaS is obsessed with lead generation, that might be because a few viral posts created a feedback loop. But if r/Entrepreneur, r/microsaas, AND r/smallbusiness all independently surface the same pain, that is convergence. And convergence is the strongest form of validation you can get from online research.

How to Test for Convergence

Take your top pain categories from Phase 3 and search for the same themes in your Type C (adjacent) subreddit. You are looking for:

- Same problem, different words. r/SaaS might say "lead generation." r/smallbusiness might say "finding new customers." Same pain, different vocabulary.

- Same frustration, different context. A developer in r/webdev frustrated with client management is the same signal as a designer in r/graphic_design frustrated with client management.

- Independent occurrence. The threads should not be cross posted or referencing each other. They should describe the problem independently.

If your pain point converges across 2+ communities, your confidence level should increase dramatically.
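
The convergence check itself is a set operation once the vocabulary has been normalized. A minimal sketch, assuming you have already mapped each community's wording ("lead generation", "finding new customers") onto one shared label by hand. The subreddit data below is illustrative.

```python
def converging_pains(pains_by_subreddit, min_communities=2):
    """Return pain categories seen in at least `min_communities` subreddits."""
    seen = {}
    for subreddit, pains in pains_by_subreddit.items():
        for pain in set(pains):            # count each subreddit once per pain
            seen.setdefault(pain, set()).add(subreddit)
    return {pain: sorted(subs) for pain, subs in seen.items()
            if len(subs) >= min_communities}

# Illustrative labels after manual vocabulary normalization.
pains_by_subreddit = {
    "r/SaaS": ["customer acquisition", "pricing", "customer acquisition"],
    "r/smallbusiness": ["customer acquisition"],
    "r/freelance": ["client management"],
}

converged = converging_pains(pains_by_subreddit)
print(converged)
```

This does not check the third criterion, independent occurrence. Cross posted threads still have to be removed by hand before you count them.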

Phase 7: The Verdict (Hours 33 to 48)

At this point, you have:

- 100 to 200 posts analyzed

- Pain categories ranked by frequency

- Signals scored by tier

- Competitors mapped with gaps identified

- Convergence tested across communities

Now it is time to make a decision.

The Opportunity Score Card

| Criteria | Score (1 to 5) | Weight | Notes |

|---|---|---|---|

| Pain frequency (10+ threads) | | 3x | How often does this pain appear |

| Signal quality (Tier 1 and 2 count) | | 4x | Are people paying or quantifying pain |

| Competitive gaps | | 3x | Are there clear unserved needs |

| Cross community convergence | | 3x | Does pain appear in 2+ subreddits |

| Your domain knowledge | | 2x | Do you understand this market |

| Technical feasibility (can you build it) | | 2x | MVP buildable in 30 days or less |

| Market size potential | | 1x | Could this reach $10K+ MRR |

Score each criterion 1 to 5, multiply by weight, and total. The weights add up to 18, so the maximum is 90. A weighted score of 60+ is a strong go signal. Below 40 is a kill signal. A score from 40 to 59 means you need more data. For more on kill signals, read SaaS Idea Kill Criteria.
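
The score card arithmetic can be sketched in a few lines of Python. The weights and thresholds come straight from the score card. The dictionary keys and the example scores are hypothetical.

```python
WEIGHTS = {
    "pain_frequency": 3,
    "signal_quality": 4,
    "competitive_gaps": 3,
    "convergence": 3,
    "domain_knowledge": 2,
    "technical_feasibility": 2,
    "market_size": 1,
}
MAX_SCORE = 5 * sum(WEIGHTS.values())  # 5 * 18 = 90

def opportunity_score(scores):
    """Multiply each 1-to-5 score by its weight and total the result."""
    for name in WEIGHTS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1 to 5, got {scores[name]}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)

def verdict(total):
    """Apply the thresholds: 60+ is go, below 40 is kill, otherwise gather more data."""
    if total >= 60:
        return "go"
    if total < 40:
        return "kill"
    return "need more data"

# Hypothetical idea: strong signals, average gaps, small market.
example = {
    "pain_frequency": 4,
    "signal_quality": 4,
    "competitive_gaps": 3,
    "convergence": 3,
    "domain_knowledge": 5,
    "technical_feasibility": 4,
    "market_size": 2,
}

total = opportunity_score(example)
print(f"{total} / {MAX_SCORE}: {verdict(total)}")  # 66 / 90: go
```

Because signal quality carries the heaviest weight, moving it from 4 to 2 in this example drops the total from 66 to 58 and changes the verdict from go to need more data. A pivot is not something the number can tell you. That call still comes from reading where the signals point.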

Three Possible Verdicts

Go: Pain is frequent, signals are Tier 1 or 2, competitors have clear gaps, and the pain converges across communities. Next step: build a landing page or pre sell.

Pivot: The pain is real but your original angle is wrong. Maybe you were thinking B2C but the data points to B2B. Maybe the market is too small in your niche but large in an adjacent one. Adjust your hypothesis and run another 24 hour cycle focused on the pivot direction.

Kill: Mostly Tier 3 signals, no convergence, saturated competitive landscape with no clear gaps, or a market too small to sustain a business. Walk away. The whole point of this method is to kill bad ideas fast so you can find good ones faster. As I quoted in my cold calling failure post: "In entrepreneurship, the goal is to kill bad ideas as fast as possible."

Common Mistakes in Reddit Research

Mistake 1: Searching by solution instead of problem. Searching "scheduling tool" gives you marketing content. Searching "I spend hours scheduling" gives you pain signals. Always search in buyer language.

Mistake 2: Counting upvotes as validation. A post with 500 upvotes means roughly 500 people found it interesting, not that 500 people would pay for a solution. Always look at the comments for Tier 1 and Tier 2 signals.

Mistake 3: Only looking at one subreddit. The convergence test exists for a reason. One community can be an echo chamber.

Mistake 4: Confirmation bias. If you go into research hoping to validate your existing idea, you will find "evidence" for it no matter what. The remedy is to let pain categories emerge from the data instead of pre defining them.

Mistake 5: Spending more than 48 hours. The point of this method is speed. If you cannot find strong signals in 48 hours, the idea probably does not have enough demand to be worth building. Do not extend the research hoping the data will improve. It will not. Move on to the next idea.

After 48 Hours: Next Steps

If your verdict is "Go," the next steps depend on your risk tolerance and resources:

Lowest risk path: Build a landing page describing the solution, drive traffic from the subreddits where you found the pain, and measure signups. If you get 100 signups in 2 weeks, build the MVP.

Fastest path: Pre sell to 5 to 10 potential customers from the Reddit threads you analyzed. Offer a discounted lifetime deal for early adopters. If 3 people pay, you have product market fit before writing any code.

Vibe coder path: Use your Reddit research to write a detailed spec, feed it to Cursor or Lovable, and ship an MVP in a weekend. Then go back to the subreddits where you found the pain and share it. The research you did to validate the idea also gives you your go to market strategy because you already know exactly where your customers are.

For a deeper look at market sizing after validation, check TAM SAM SOM for SaaS.

Frequently Asked Questions

Can I validate a SaaS idea in less than 48 hours?

Yes, if you automate the data collection phase. Using ValidSaaS, the scraping and initial analysis for 100 posts takes under 30 minutes. That compresses the entire process to about 24 hours. The competitive analysis and cross community validation still take manual effort.

What if my target audience is not on Reddit?

Reddit covers most B2B and developer audiences well. If your target audience is not on Reddit (for example, senior citizens or rural farmers), adapt the same method to wherever they do congregate: Facebook groups, niche forums, industry publications. The framework is platform agnostic. Reddit just happens to be the richest source for SaaS and tech audiences.

How much Reddit data is enough for validation?

50 posts with full comment threads gives you directional insight. 100+ posts across 2 subreddits gives you high confidence validation. Going beyond 200 posts rarely changes the patterns. You hit diminishing returns quickly.

Does this method work for products that do not exist yet?

Absolutely. You are not searching for people asking for your specific product. You are searching for people describing the problem your product would solve. The problem exists before the solution does.

Should I reach out to people in the Reddit threads?

After completing your research, yes. But do it carefully. Reddit culture is hostile to unsolicited promotion. The best approach: leave a genuinely helpful comment (not a pitch), and if someone responds positively, follow up with a direct message asking about their experience. Never lead with your product.

How is this different from customer interviews?

Customer interviews are deep but narrow. You talk to 5 to 10 people and get detailed insights. The Reddit Research Method is broad but structured. You analyze 100+ conversations and find patterns across hundreds of people. The best approach is to use Reddit research first (to identify what to ask), then do targeted interviews (to go deep on the most promising findings).

This method was developed through analysis of 8,000+ Reddit threads and 300,000+ comments across r/SaaS, r/Entrepreneur, r/microsaas, and dozens of niche communities. It has been used to validate multiple SaaS opportunities and is the foundation behind ValidSaaS.

Keep Reading

How to Find SaaS Ideas on Reddit: 7 Subreddits Where Users Share Real Pain Points

Stop guessing what to build. These 7 subreddits contain thousands of unfiltered complaints, feature requests, and willingness to pay signals from real users. Learn exactly how to mine them for validated SaaS ideas.

Pain Point Analysis for SaaS: The 3 Questions That Reveal High Intent Opportunities

Learn the 3 question framework for analyzing SaaS pain points that separates real demand from noise. Includes a scoring rubric, real Reddit examples, and step by step instructions for indie hackers, vibe coders, and bootstrapped founders.

SaaS Idea Kill Criteria: 7 Data Backed Signs You Should Pivot or Quit

Not every SaaS idea deserves to be built. Learn the 7 kill criteria that separate doomed projects from real opportunities, based on analysis of 8,000+ Reddit threads and dozens of failed SaaS launches.